It’s no secret that computer scientists and engineers are looking to the human brain as a means of achieving the next great leap in computer evolution. Already, machines are being developed that rely on machine blood, can continue working despite being damaged, and recognize images and speech. And soon, a computer chip that is capable of learning from its mistakes will also be available.

The new computing approach, already in use by some large technology companies, is based on the biological nervous system – specifically on how neurons react to stimuli and connect with other neurons to interpret information. It allows computers to absorb new information while carrying out a task, and adjust what they do based on the changing signals.

The first commercial version of the new kind of computer chip is scheduled to be released in 2014, and is the result of a collaborative effort between I.B.M. and Qualcomm, as well as a Stanford research team. This “neuromorphic processor” can not only automate tasks that once required painstaking programming, but can also sidestep and even tolerate errors, potentially making the term “computer crash” obsolete.

In coming years, the approach will make possible a new generation of artificial intelligence systems that will perform some functions that humans do with ease: see, speak, listen, navigate, manipulate and control. That could have enormous consequences for tasks like facial and speech recognition, navigation and planning, which are still in elementary stages and rely heavily on human programming.

For example, computer vision systems only “recognize” objects that can be identified by the statistics-oriented algorithms programmed into them. An algorithm is like a recipe, a set of step-by-step instructions to perform a calculation. But last year, Google researchers were able to get a machine-learning algorithm, known as a “Google Neural Network”, to perform an identification task (involving cats) without supervision.

And this past June, the company said it had used those neural network techniques to develop a new search service to help customers find specific photos more accurately. Then in November, researchers at Stanford University came up with a new algorithm that could give computers the power to more reliably interpret language. It’s known as the Neural Analysis of Sentiment (NaSent).

A similar concept known as Deep Learning is also looking to endow software with a measure of common sense. Google is using this technique with its voice recognition technology to aid in performing searches. In addition, the social media giant Facebook is looking to use deep learning to improve Graph Search, an engine that allows users to search activity on their network.

Until now, the design of computers was dictated by ideas originated by the mathematician John von Neumann about 65 years ago. Microprocessors perform operations at lightning speed, following instructions programmed using long strings of binary code (0s and 1s). The information is stored separately in what is known as memory, either in the processor itself, in adjacent storage chips or in higher capacity magnetic disk drives.

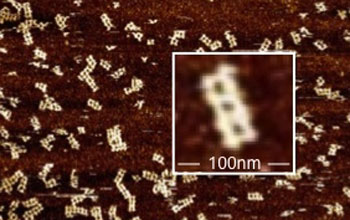

By contrast, the new processors consist of electronic components that can be connected by wires that mimic biological synapses. Because they are based on large groups of neuron-like elements, they are known as neuromorphic processors, a term credited to the California Institute of Technology physicist Carver Mead, who pioneered the concept in the late 1980s.

These processors are not “programmed” in the conventional sense. Instead, the connections between the circuits are “weighted” according to correlations in data that the processor has already “learned.” Those weights are then altered as data flows into the chip, causing them to change their values and to “spike.” This, in turn, strengthens some connections and weakens others, reacting much the same way the human brain does.
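As a rough illustration of the weighting and spiking described above, here is a minimal Python sketch of a toy "leaky integrate-and-fire" neuron. This is not how the I.B.M. or Qualcomm chips are actually implemented; the class name `SpikingNeuron`, the threshold, the leak factor, the learning rate and the simple strengthen/weaken rule are all assumptions chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class SpikingNeuron:
    """Toy leaky integrate-and-fire neuron (hypothetical, for illustration only)."""
    weights: list[float]          # one connection weight per input line
    threshold: float = 1.0        # potential at which the neuron spikes (assumed value)
    leak: float = 0.9             # fraction of potential kept each step (assumed value)
    learning_rate: float = 0.1    # size of each weight adjustment (assumed value)
    potential: float = 0.0        # accumulated input, reset after a spike

    def step(self, inputs: list[int]) -> bool:
        """Feed one time step of 0/1 inputs; return True if the neuron spikes."""
        weighted_input = sum(w * x for w, x in zip(self.weights, inputs))
        self.potential = self.potential * self.leak + weighted_input
        if self.potential < self.threshold:
            return False
        # On a spike, strengthen the connections that were active
        # and weaken the ones that were silent, clamped to [0, 1].
        self.weights = [
            min(1.0, w + self.learning_rate) if x else max(0.0, w - self.learning_rate)
            for w, x in zip(self.weights, inputs)
        ]
        self.potential = 0.0
        return True


neuron = SpikingNeuron(weights=[0.5, 0.5, 0.5])
pattern = [1, 1, 0]  # the first two inputs fire together, the third stays silent
spikes = [neuron.step(pattern) for _ in range(5)]
print(spikes, neuron.weights)
```

Repeatedly presenting the same pattern drives the weights of the two active inputs up and the weight of the silent input down, which is the sense in which the connections "learn" from the data flowing through them rather than from explicit instructions.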

In the words of Dharmendra Modha, an I.B.M. computer scientist who leads the company’s cognitive computing research effort:

Instead of bringing data to computation as we do today, we can now bring computation to data. Sensors become the computer, and it opens up a new way to use computer chips that can be everywhere.

One great advantage of the new approach is its ability to tolerate glitches, whereas traditional computers cannot work around the failure of even a single transistor. With the biological designs, the algorithms are ever changing, allowing the system to continuously adapt and work around failures to complete tasks. Another benefit is energy efficiency, another inspiration drawn from the human brain.

The new computers, which are still based on silicon chips, will not replace today’s computers but augment them, at least for the foreseeable future. Many computer designers see them as coprocessors, meaning they can work in tandem with other circuits that can be embedded in smartphones and the centralized computers that run computing clouds.

However, the new approach is still limited, thanks to the fact that scientists still do not fully understand how the human brain functions. As Kwabena Boahen, a computer scientist who leads Stanford’s Brains in Silicon research program, put it:

We have no clue. I’m an engineer, and I build things. There are these highfalutin theories, but give me one that will let me build something.

Luckily, there are efforts underway that are designed to remedy this, with the specific intention of directing that knowledge towards the creation of better computers and AIs. One such effort is the Center for Brains, Minds and Machines, a new research center financed by the National Science Foundation and based at the Massachusetts Institute of Technology, with Harvard and Cornell.

Another is the California Institute for Telecommunications and Information Technology (a.k.a. Calit2) – a center dedicated to innovation in nanotechnology, life sciences, information technology, and telecommunications. As Larry Smarr, an astrophysicist and director of the institute, put it:

We’re moving from engineering computing systems to something that has many of the characteristics of biological computing.

And last, but certainly not least, is the Human Brain Project, an international group of 200 scientists from 80 different research institutions and based in Lausanne, Switzerland. Having secured the $1.6 billion they need to fund their efforts, these researchers will spend the next ten years conducting research that cuts across multiple disciplines.

This initiative, which has been compared to the Large Hadron Collider, will attempt to reconstruct the human brain piece-by-piece and gradually bring these cognitive components into an overarching supercomputer. The expected result of this research will be new platforms for “neuromorphic computing” and “neurorobotics,” allowing for the creation of computing and robotic architectures that mimic the functions of the human brain.

When future generations look back on this decade, no doubt they will refer to it as the birth of the neuromorphic computing revolution. Or maybe just Neuromorphic Revolution for short, but that sort of depends on the outcome. With so many technological revolutions well underway, it is difficult to imagine how the future will look back and characterize this time.

Perhaps, as Charles Stross suggests, it will simply be known as “the teens”, that time in pre-Singularity history where it was all starting to come together, but was yet to explode and violently change everything we know. I for one am looking forward to being around to witness it all!

Sources: nytimes.com, technologyreview.com, calit2.net, humanbrainproject.eu

According to a story published on July 10 in The Astrophysical Journal, a radio burst was detected that may have originated outside of our galaxy. Apparently, these split-second radio bursts have been heard before, but always with the same telescope – Parkes Observatory in Australia. Given that only this observatory was detecting these signals, there was debate about whether they were coming from inside our galaxy, or even from Earth itself.

Fast radio bursts are a flurry of radio waves that last a few thousandths of a second, and at any given minute there are only seven of these in the sky on average, according to the Max Planck Institute for Radio Astronomy. Their cause is unknown, and the possibilities range from black holes, to neutron stars coming together, to the magnetic field of pulsars (a type of neutron star) flaring up.

This astonishingly large number is inferred by calculating how much sky was observed, and for how long, in order to make the few detections that have so far been reported. Laura Spitler, a postdoctoral researcher at the Max Planck Institute for Radio Astronomy in Bonn, Germany and the lead author of the new paper, was also the first person to note the event. As she explained:

Efforts are now under way to detect radio bursts using radio telescopes that can observe broad swaths of the sky to help identify them. Telescopes under construction in Australia and South Africa as well as the CHIME telescope in Canada have the potential to detect fast radio bursts. Astronomers say these and other new facilities could pave the way for many more discoveries and a better understanding of this mysterious cosmic phenomenon.