Welcome to the world of tomorroooooow! Or more precisely, to many possible scenarios that humanity could face as it steps into the future. Perhaps it’s been all this talk of late about the future of humanity, and how space exploration and colonization may be the only way to ensure our survival. Or it could be I’m just recalling what a friend of mine – Chris A. Jackson – wrote in his “Flash in the Pan” piece – a short story that subsequently inspired me to write the novel Source.

Either way, I’ve been thinking about the likely future scenarios and thought I should include them alongside the Timeline of the Future. After all, one cannot predict the course of the future so much as predict possible outcomes and paths, and trust that the one they believe in most will come true. So, borrowing the same format Chris used, here are a few potential fates, listed from worst to best – or least to most advanced.

1. Humanrien:

Due to the runaway effects of Climate Change during the 21st/22nd centuries, the Earth is now a desolate shadow of its once-great self. Humanity is non-existent, as are many other species of mammals, avians, reptiles, and insects. And it is predicted that the process will continue into the foreseeable future, until such time as the atmosphere becomes a poisoned, sulfuric vapor and the ground nothing more than windswept ashes and molten metal.

One thing is clear though: the Earth will never recover, and humanity’s failure to seed other planets with life and maintain a sustainable existence on Earth has led to its extinction. The universe shrugs and carries on…

2. Post-Apocalyptic:

Whether it is due to nuclear war, a bio-engineered plague, or some kind of “nanocaust”, civilization as we know it has come to an end. All major cities lie in ruins and are populated only by marauders and street gangs, the more peaceful-minded people having fled to the countryside long ago. In scattered locations along major rivers, coastlines, or within small pockets of land, tiny communities have formed and eke out an existence from the surrounding countryside.

At this point, it is unclear whether humanity will recover or remain at the level of a pre-industrial civilization forever. One thing seems clear: humanity will not go extinct just yet. With so many pockets spread across the entire planet, no single fate could claim all of them anytime soon. At least, one can hope not.

3. Dog Days:

The world continues to endure recession as resource shortages, high food prices, and diminishing space for real estate plague the global economy. Fuel prices remain high, and opposition to new oil and natural gas drilling and extraction is being blamed. Add to that the crushing burden of displacement and flooding, which is costing governments billions of dollars a year, and you have life as we know it.

The smart money appears to be in offshore real estate, where Lilypad cities and Arcologies are being built along the coastlines of the world. Habitats have already been built in Boston, New York, New Orleans, Tokyo, Shanghai, Hong Kong and the south of France, and more are expected in the coming years. These are the most promising solution to the constant flooding and damage caused by rising tides and increased coastal storms.

In these largely self-contained cities, those who can afford space intend to wait out the worst. It is expected that by the middle of the 22nd century, virtually all major ocean-front cities will be abandoned and those that sit on major waterways will be protected by huge levees. Farmland will also be virtually non-existent except within the Polar Belts, which means the people living in the most populous regions of the world will either have to migrate or die.

No one knows how the world’s 9 billion will endure in that time, but for the roughly 100 million living at sea, it’s not a pressing concern.

4. Technological Plateau:

Computers have reached a threshold of speed and processing power. Despite the discovery of graphene, the use of optical components, and the development of quantum computing and quantum internet principles, it now seems that machines are as smart as they will ever be. That is to say, they are only slightly more intelligent than humans, and still can’t pass the Turing Test with any consistency.

It seems the long-awaited explosion in learning and intelligence predicted by von Neumann, Kurzweil and Vinge has fallen flat. That being said, life is getting better. With all the advances turned towards solving humanity’s problems, alternative energy, medicine, cybernetics and space exploration are still growing apace; just not as fast or as impressively as people in the previous century had hoped.

Missions to Mars have been mounted, but a colony on that world is still a long way off. A settlement on the Moon has been built, but mainly to monitor the research and solar energy operations there. And global food shortages and CO2 emissions are steadily declining. It seems that the words “sane planning, sensible tomorrow” have come to characterize humanity’s existence. Which is good… not great, but good.

Humanity’s greatest expectations may have yielded some disappointment, but everyone agrees that things could have been a hell of a lot worse!

5. The Green Revolution:

The global population has reached 10 billion. But the good news is, it’s been that way for several decades. Thanks to smart housing, hydroponics and urban farms, hunger and malnutrition have been eliminated. The needs of the Earth’s people are also being met by a combination of wind, solar, tidal, geothermal and fusion power. And though space is not exactly abundant, there is little want for housing anymore.

Additive manufacturing, biomanufacturing and nanomanufacturing have all led to an explosion in how public spaces are built and administered. Though this has led to the elimination of human construction work and skilled labor, the process is safer, cleaner, and more efficient, and it has ensured that anything built within the past half-century is in harmony with the surrounding environment.

This explosion in geological engineering is due in part to settlement efforts on Mars and the terraforming of Venus. Building a livable environment on one and transforming the acidic atmosphere of the other have helped humanity test key technologies and processes used to end global warming and rehabilitate the seas and soil here on Earth. Over 100,000 people now call themselves “Martian”, and an additional 10,000 Venusians are expected before long.

Colonization is an especially attractive prospect for those who feel that Earth is too crowded, too conservative, and lacking in personal space…

6. Intrepid Explorers:

Humanity has successfully colonized Mars and Venus, and is busy settling the many moons of the outer Solar System. Current population statistics indicate that over 50 billion people now live on a dozen worlds, and many are feeling the itch for adventure. With deep-space exploration now practical, thanks to the development of the Alcubierre Warp Drive, many missions have been mounted to explore and colonize neighboring star systems.

These include Earth’s immediate neighbor, Alpha Centauri, but also the viable star systems of Tau Ceti, Kapteyn’s Star, Gliese 581, Kepler-62, HD 85512, and many more. With so many Earth-like, potentially habitable planets in the near-universe now within our reach, nothing seems to stand between us and the dream of an interstellar human race. Missions to find extra-terrestrial intelligence are even being plotted.

This is one prospect humanity both anticipates and fears. While it is clear that no sentient life exists within the local group of star systems, our exploration of the cosmos has just begun. And if our ongoing scientific surveys have proven anything, it is that the conditions for life exist within many star systems and on many worlds. There’s no telling when we might find one that has produced life as complex as our own, but time will tell.

One can only imagine what they will look like. One can only wonder whether they are more or less advanced than us. And most importantly, one can only hope that they will be friendly…

7. Post-Humanity:

Cybernetics, biotechnology, and nanotechnology have led to an era of enhancement in which virtually every human being has evolved beyond their biological limitations. Advanced medicine, digital sentience and cryonics have prolonged life indefinitely, and when someone is facing death, they can preserve their neural patterns or their brain for all time by simply uploading the former or placing the latter in stasis.

Both of these options have made deep-space exploration a reality. Preserved human beings launch themselves towards exoplanets, while the neural uploads of explorers spend decades or even centuries traveling between star systems aboard tiny spaceships. Space penetrators are fired in all directions to telexplore the most distant worlds, with the information being beamed back to Earth via quantum communications.

It is an age of posts – post-scarcity, post-mortality, and post-humanism. Despite the existence of two billion people with minimal enhancement, there appears to be no stopping the trend. And given the breakneck pace at which life moves around them, it is expected that the unenhanced – “organics”, as they are often known – will migrate outward to Europa, Ganymede, Titan, Oberon, and the many space habitats that dot the outer Solar System.

Presumably, they will mount their own space exploration in the coming decades to find new homes abroad in interstellar space, where their kind can expect not to be swept aside by the unstoppable tide of progress.

8. Star Children:

Earth is no more. The Sun is now a mottled shadow of its old self. Surrounded by many layers of computronium, our parent star has gone from being the source of all light and energy in our solar system to the energy source that powers the giant Dyson Swarm at the center of our universe. Within this vast Matrioshka Brain, trillions of human minds exist as quantum-state neural patterns, living indefinitely in simulated realities.

Within the outer Solar System and beyond lie billions more, enhanced trans- and post-humans who have opted for an “Earthly” existence amongst the planets and stars. However, life seems somewhat limited out there, very rustic compared to the infinite bandwidth and computational power of the inner Solar System. And with this strange dichotomy upon them, the human race suspects that it might have solved the Fermi Paradox.

If other sentient life can be expected to have followed a pattern of technological development similar to that of the human race, then surely they too have evolved to the point where the majority of their species lives in Dyson Swarms around their parent stars. Venturing beyond holds little appeal, as it means moving away from the source of bandwidth and becoming isolated. Hopefully, some of them are adventurous enough to meet humanity partway…

_____

Which will come true? Who’s to say? Whether it’s apocalyptic destruction or runaway technological evolution, cataclysmic change is expected and could very well threaten our existence. Personally, I’m hoping for something in the scenario 5 and/or 6 range. It would be nice to know that both humanity and the world it originated from will survive the coming centuries!

In so doing, he demonstrated that the nervous system was like a computer terminal through which you could deliver commands to stop a problem, like acute inflammation, before it starts, or repair a body after it gets sick. His work also seemed to indicate that electricity delivered to the vagus nerve in just the right intensity and at precise intervals could reproduce a drug’s therapeutic reaction, but with greater effectiveness, minimal health risks, and at a fraction of the cost of “biologic” pharmaceuticals.

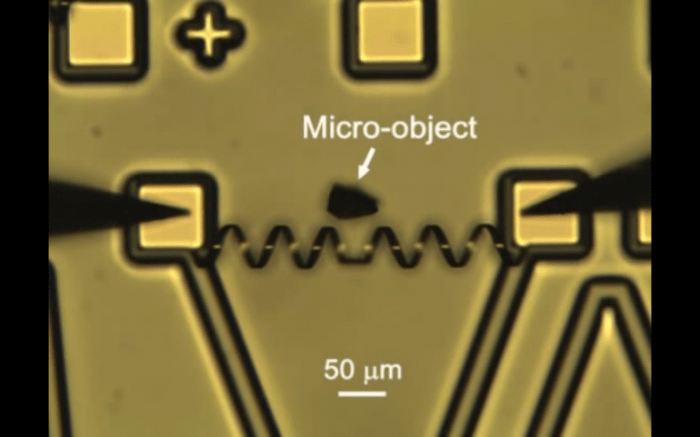

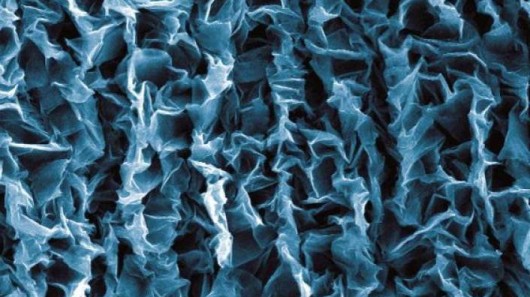

Impressive as this may seem, bioelectronics are just part of the growing discussion about neurohacking. In addition to the leaps and bounds being made in the field of brain-to-computer interfacing (and brain-to-brain interfacing), which would allow people to control machinery and share thoughts across vast distances, there is also a field of neurosurgery that is seeking to use the miracle material of grap

In the end, neuromorphic chips and technology are merely one half of the equation. In the grand scheme of things, the aim of all of this research is not only to produce technology that can ensure better biology, but also to use technology inspired by biology to create better machinery. The end result of this, according to some, is a world in which biology and technology increasingly resemble each other, to the point that there is barely a distinction to be made and they can be merged.