It’s been a long while since I did a book review, mainly because I’ve been immersed in my writing. But sooner or later, you have to return to the source, right? As usual, I’ve been reading books that I hope will help me expand my horizons and become a better writer. And with that in mind, I thought I’d finally review a book I finished reading some months ago, one which I read in the hopes of learning my craft.

It’s called Accelerando, one of Charles Stross’ better-known works, which earned him nominations for the Hugo, Campbell, Clarke, and British Science Fiction Association Awards. The book contains nine short stories, all of which were originally published as novellas and novelettes in Asimov’s Science Fiction. Each one revolves around the Macx family, looking at three generations that live before, during, and after the technological singularity.

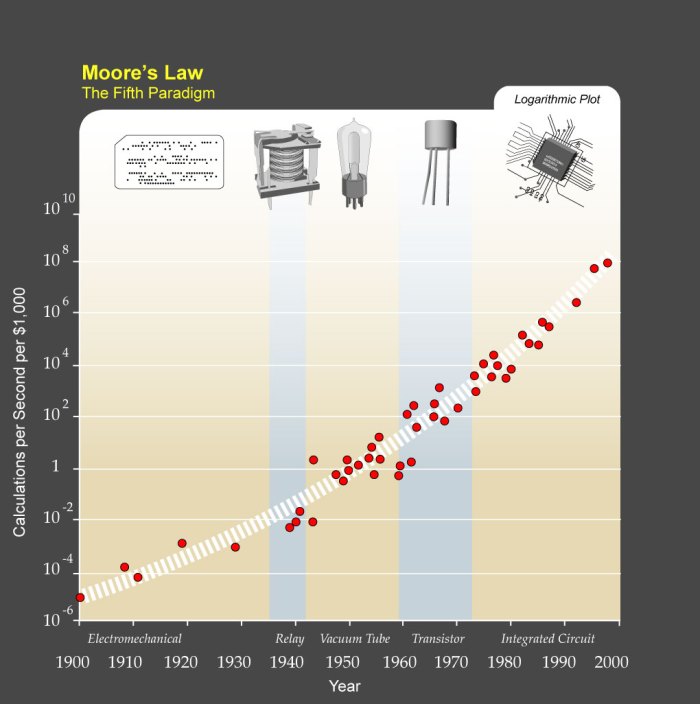

This is the central focus of the story – and Stross’ particular obsession – which he explores in serious depth. The title, which in Italian means “speeding up” and is used as a tempo marking in musical notation, refers to the accelerating rate of technological progress and its impact on humanity. Beginning in the 21st century with Manfred Macx, a “venture altruist”, and moving to his daughter Amber in the mid-21st century, the story culminates with Sirhan al-Khurasani, Amber’s son, in the late 21st century and distant future.

In the course of all that, the story looks at such high-minded concepts as nanotechnology, utility fogs, clinical immortality, Matrioshka Brains, extra-terrestrials, FTL, Dyson Spheres and Dyson Swarms, and the Fermi Paradox. It also takes a long view of emerging technologies and predicts where they will take us down the road.

And to quote Cory Doctorow’s own review of the book, it essentially “Makes hallucinogens obsolete.”

Plot Synopsis:

Part I, Slow Takeoff, begins with the short story “Lobsters”, which opens in early-21st century Amsterdam. Here, we see Manfred Macx, a “venture altruist”, going about his work: making business ideas happen for others and promoting development. In the course of things, Manfred receives a call on a courier-delivered phone from entities claiming to be a net-based AI working through a KGB website and seeking his help on how to defect.

Eventually, he discovers the callers are actually uploaded brain-scans of the California spiny lobster looking to escape from humanity’s interference. This leads Macx to team up with his friend, entrepreneur Bob Franklin, who is looking for an AI to crew his nascent spacefaring project—the building of a self-replicating factory complex from cometary material.

In the course of securing them passage aboard Franklin’s ship, a new legal precedent is established that will help define the rights of future AIs and uploaded minds. Meanwhile, Macx’s ex-fiancée Pamela pursues him, seeking to get him to declare his assets, driven both by her job with the IRS and by her disdain for his post-scarcity economic outlook. Eventually, she catches up to him and forces him to impregnate and marry her in an attempt to control him.

The second story, “Troubadour”, takes place three years later, when Manfred is in the middle of an acrimonious divorce from Pamela, who is once again seeking to force him to declare his assets. Their daughter, Amber, is frozen as a newly fertilized embryo, and Pamela wants to raise her in a way that would be consistent with her religious beliefs and not Manfred’s extropian views. Meanwhile, he is working on three new schemes and looking for help to make them a reality.

These include a workable state-centralized planning apparatus that can interface with external market systems, and a way to upload the entirety of the 20th century’s out-of-copyright film and music to the net. He meets up with Annette again – a woman working for Arianespace, a French commercial aerospace company – and the two begin a relationship. With her help, his schemes come together perfectly and he is able to thwart his wife and her lawyers. However, their daughter Amber is then defrosted and born, and from then on is raised by Pamela.

The third and final story in Part I is “Tourist”, which takes place five years later in Edinburgh. During this story, Manfred is mugged and his memories (stored in his Turing-compatible cyberware) are stolen. The criminal tries to use Manfred’s memories and glasses to make some money, but is horrified when he learns that all of Manfred’s plans are being made available free of charge. This forces Annette to go out and find the man who did it and cut a deal to get the memories back.

Meanwhile, the Lobsters are thriving in colonies situated at the L5 point and on a comet in the asteroid belt. Along with the Jet Propulsion Laboratory and the ESA, they have picked up encrypted signals from outside the solar system. Bob Franklin, now dead, is personality-reconstructed in the Franklin Collective. Manfred, his memories recovered, moves to further expand the rights of non-human intelligences while Aineko, his robotic cat, begins to study and decode the alien signals.

Part II, Point of Inflection, opens a decade later in the early/mid-21st century and centers on Amber Macx, now a teenager, in the outer Solar System. The first story, entitled “Halo”, centers around Amber’s plot (with Annette and Manfred’s help) to break free from her domineering mother by enslaving herself via a Yemeni shell corporation and enlisting aboard a Franklin Collective-owned spacecraft that is mining materials from Amalthea, Jupiter’s fourth moon.

To retain control of her daughter, Pamela petitions an imam named Sadeq to travel to Amalthea to issue an Islamic legal judgment against Amber. Amber manages to thwart this by setting up her own empire on a small, privately owned asteroid, thus making herself sovereign over an actual state. In the meantime, the alien signals have been decoded, and a physical journey to an alien “router” beyond the Solar System is planned.

In the second story, “Router”, the uploaded personalities of Amber and 62 of her peers travel to a brown dwarf star named Hyundai +4904/-56 to find the alien router. Traveling aboard the Field Circus, a tiny spacecraft made of computronium and propelled by a Jupiter-based laser and a lightsail, the virtualized crew are contacted by aliens.

Known as “The Wunch”, these sentients occupy virtual bodies based on Lobster patterns that were “borrowed” from Manfred’s original transmissions. After opening up negotiations for technology, Amber and her friends realize the Wunch are just a group of thieving, third-rate “barbarians” who have taken over in the wake of another species transcending thanks to a technological singularity. After thwarting The Wunch, Amber and a few others make the decision to travel deep into the router’s wormhole network.

In the third story, “Nightfall”, the router explorers find themselves trapped by yet more malign aliens in a variety of virtual spaces. In time, they realize the virtual realities are being hosted by a Matrioshka brain – a megastructure built around a star (similar to a Dyson Sphere) composed of computronium. The builders of this brain seem to have disappeared (or been destroyed by their own creations), leaving an anarchy ruled by sentient, viral corporations and scavengers who attempt to use newcomers as currency.

With Aineko’s help, the crew finally escapes by offering passage to a “rogue alien corporation” (a “pyramid scheme crossed with a 419 scam”), represented by a giant virtual slug. This alien personality opens a powered route out, and the crew begins the journey back home after many decades of being away.

Part III, Singularity, takes place back in the Solar System and is told from the point of view of Sirhan – the son of the physical Amber and Sadeq, who stayed behind. In “Curator”, the crew of the Field Circus comes home to find that the inner planets of the Solar System have been disassembled to build a Matrioshka brain similar to the one they encountered through the router. They arrive at Saturn, which is where normal humans now reside, and come to a floating habitat in Saturn’s upper atmosphere being run by Sirhan.

The crew upload their virtual states into new bodies, and find that they are all now bankrupt and unable to compete with the new Economics 2.0 model practised by the posthuman intelligences of the inner system. Manfred, Pamela, and Annette are present in various forms and realize Sirhan has summoned them all to this place. Meanwhile, Bailiffs—sentient enforcement constructs—arrive to “repossess” Amber and Aineko, but a scheme is hatched whereby the Slug is introduced to Economics 2.0, which keeps both constructs very busy.

In “Elector”, we see Amber, Annette, Manfred and Gianna (Manfred’s old political colleague) in the increasingly populated Saturnian floating cities, working on a political campaign to finance a scheme to escape the predations of the “Vile Offspring” – the sentient minds that inhabit the inner Solar System’s Matrioshka brain. With Amber in charge of the “Accelerationista” party, they plan to journey once more to the router network. She loses the election to the stay-at-home “Conservationista” faction, but once more the Lobsters step in to help by offering passage to uploads on their large ships if the humans agree to act as explorers and mappers.

In the third and final chapter, “Survivor”, things fast-forward to a few centuries after the singularity. The router has once again been reached by the human ship and humanity now lives in space habitats throughout the Galaxy. While some continue in the ongoing exploration of space, others (copies of various people) live in habitats around Hyundai and other stars, raising children and keeping all past versions of themselves and others archived.

Meanwhile, Manfred and Annette reconcile their differences and realize they were being manipulated all along. Aineko, who had been growing increasingly intelligent over the decades, was apparently pushing Manfred to fulfill his schemes in order to bring humanity to the alien node and help it escape the fate of other civilizations that were consumed by their own technological progress.

Summary:

Needless to say, this book was one big tome of big ideas, and could be mind-bendingly weird and inaccessible at times! I’m thankful I came to it when I did, because no one should attempt to read this until they’ve had sufficient priming by studying all the key concepts involved. For instance, don’t even think about touching this book unless you’re familiar with the notion of the Technological Singularity. Beyond that, be sure to familiarize yourself with things like utility fogs, Dyson Spheres, computronium, nanotechnology, and the basics of space travel.

You know what, let’s just say you shouldn’t be allowed to read this book until you’ve first tackled writers like Ray Kurzweil, William Gibson, Arthur C. Clarke, Alastair Reynolds and Neal Stephenson. Maybe Vernor Vinge too, who I’m currently working on. But assuming you can wrap your mind around the things presented therein, you will feel like you’ve digested something pretty elephantine, and which is still pretty cutting edge a decade or more after it was first published!

But to break it all down, the story is essentially a sort of cautionary tale about the dangers of the ever-increasing pace of change and advancement. At several points in the story, the drive toward extropianism and post-humanity is held up as both an inevitability and a fearful prospect. It’s also presented as a possible explanation for the Fermi Paradox – which asks why, if sentient life is statistically likely and plentiful in our universe, humanity has not observed or encountered it.

According to Stross, it is because sentient species – which would all presumably have the capacity for technological advancement – will eventually be consumed by the explosion caused by ever-accelerating progress. This will inevitably lead to a situation where all matter can be converted into computing space, all thought and existence can be uploaded, and species will not want to venture away from their solar system because the bandwidth will be too weak. In a society built on computronium and endless time, instant communication and access will be tantamount to life itself.

All that being said, the inaccessibility can be tricky sometimes and can make the read feel like it’s a bit of a labor. And the twist at the end did seem a little contrived and out of left field. It certainly made sense in the context of the story, but to think that a robotic cat that was progressively getting smarter was the reason behind so much of the story’s dynamic – both in terms of the characters and the larger plot – seemed sudden and farfetched.

And in reality, the story was more about the technical aspects and deeper philosophical questions than anything about the characters themselves. As such, anyone who enjoys character-driven stories should probably stay away from it. But for people who enjoy plot-driven tales that are very dense and loaded with cool technical stuff (which describes me pretty well!), this is definitely a must-read.

Now if you will excuse me, I’m off to finish Vernor Vinge’s Rainbows End, another dense, sometimes inaccessible read!

Have you ever read a book that felt like it came along at exactly the right time? Or one that spoke to you and your particular interests at the time? Well, this was one such book for me. Rather than being a single story, this book is actually a collection of shorts that Stross wrote during the early 2000s, all connected by a common theme. Essentially, the nine shorts tell the story of three generations of the Macx family, and take place before, during and after the Technological Singularity.
