Could it be that the reason we are confronted by the “Great Silence” is that advanced civilizations are being wiped out? This is the essence of the Berserker Hypothesis, inspired by science fiction but rooted in scientific theory. It combines the concept of von Neumann probes, nanotechnology, and the idea that the greatest threat to advanced life is itself!

Tag: John von Neumann

The Fate of Humanity

Welcome to the world of tomorroooooow! Or more precisely, to many possible scenarios that humanity could face as it steps into the future. Perhaps it’s been all this talk of late about the future of humanity, how space exploration and colonization may be the only way to ensure our survival. Or it could be I’m just recalling what a friend of mine – Chris A. Jackson – wrote with his “Flash in the Pan” piece – a short that consequently inspired me to write the novel Source.

Either way, I’ve been thinking about the likely future scenarios and thought I should include them alongside the Timeline of the Future. After all, one cannot predict the course of the future so much as predict possible outcomes and paths, and trust that the one they believe in the most will come true. So, borrowing from the same format Chris used, here are a few potential fates, listed from worst to best – or least to most advanced.

1. Humanrien:

Due to the runaway effects of Climate Change during the 21st/22nd centuries, the Earth is now a desolate shadow of its once-great self. Humanity is non-existent, as are many other species of mammals, avians, reptiles, and insects. And it is predicted that the process will continue into the foreseeable future, until such time as the atmosphere becomes a poisoned, sulfuric vapor and the ground nothing more than windswept ashes and molten metal.

One thing is clear though: the Earth will never recover, and humanity’s failure to seed other planets with life and maintain a sustainable existence on Earth has led to its extinction. The universe shrugs and carries on…

2. Post-Apocalyptic:

Whether it is due to nuclear war, a bio-engineered plague, or some kind of “nanocaust”, civilization as we know it has come to an end. All major cities lie in ruin and are populated only by marauders and street gangs, the more peaceful-minded people having fled to the countryside long ago. In scattered locations along major rivers, coastlines, or within small pockets of land, tiny communities have formed and eke out an existence from the surrounding countryside.

At this point, it is unclear if humanity will recover or remain at the level of a pre-industrial civilization forever. One thing seems clear: humanity will not go extinct just yet. With so many pockets spread across the entire planet, no single fate could claim all of them anytime soon. At least, one can hope that it won’t.

3. Dog Days:

The world continues to endure recession as resource shortages, high food prices, and diminishing space for real estate continue to plague the global economy. Fuel prices remain high, and opposition to new drilling and oil and natural gas extraction is being blamed. Add to that the crushing burdens of displacement and flooding that are costing governments billions of dollars a year, and you have life as we know it.

The smart money appears to be in offshore real estate, where Lilypad cities and Arcologies are being built along the coastlines of the world. Already, habitats have been built in Boston, New York, New Orleans, Tokyo, Shanghai, Hong Kong and the south of France, and more are expected in the coming years. These are the most promising solution to the constant flooding and damage being caused by rising tides and increased coastal storms.

In these largely self-contained cities, those who can afford space intend to wait out the worst. It is expected that by the mid-point of the 22nd century, virtually all major ocean-front cities will be abandoned and those that sit on major waterways will be protected by huge levees. Farmland will also be virtually non-existent except within the Polar Belts, which means the people living in the most populous regions of the world will either have to migrate or die.

No one knows how the world’s 9 billion will endure in that time, but for the roughly 100 million living at sea, it’s not a pressing concern.

4. Technological Plateau:

Computers have reached a threshold of speed and processing power. Despite the discovery of graphene, the use of optical components, and the development of quantum computing/internet principles, it now seems that machines are as smart as they will ever be. That is to say, they are only slightly more intelligent than humans, and still can’t seem to beat the Turing Test with any consistency.

It seems the long-awaited explosion in learning and intelligence predicted by von Neumann, Kurzweil and Vinge has fallen flat. That being said, life is getting better. With all the advances turned towards finding solutions to humanity’s problems, alternative energy, medicine, cybernetics and space exploration are still growing apace; just not as fast or awesomely as people in the previous century had hoped.

Missions to Mars have been mounted, but a colony on that world is still a long way off. A settlement on the Moon has been built, but mainly to monitor the research and solar energy concerns that exist there. And the problems of global food shortages and CO2 emissions are steadily declining. It seems that the words “sane planning, sensible tomorrow” have come to characterize humanity’s existence. Which is good… not great, but good.

Humanity’s greatest expectations may have yielded some disappointment, but everyone agrees that things could have been a hell of a lot worse!

5. The Green Revolution:

The global population has reached 10 billion. But the good news is, it’s been that way for several decades. Thanks to smart housing, hydroponics and urban farms, hunger and malnutrition have been eliminated. The needs of the Earth’s people are also being met by a combination of wind, solar, tidal, geothermal and fusion power. And though space is not exactly at a premium, there is little want for housing anymore.

Additive manufacturing, biomanufacturing and nanomanufacturing have all led to an explosion in how public spaces are built and administered. Though it has led to the elimination of human construction and skilled labor, the process is much safer, cleaner, and more efficient, and has ensured that anything built within the past half-century is harmonious with the surrounding environment.

This explosion in geological engineering is due in part to settlement efforts on Mars and the terraforming of Venus. Building a liveable environment on one and transforming the acidic atmosphere on the other have helped humanity to test key technologies and processes used to end global warming and rehabilitate the seas and soil here on Earth. Over 100,000 people now call themselves “Martian”, and an additional 10,000 Venusians are expected before long.

Colonization is an especially attractive prospect for those who feel that Earth is too crowded, too conservative, and lacking in personal space…

6. Intrepid Explorers:

Humanity has successfully colonized Mars and Venus, and is busy settling the many moons of the outer Solar System. Current population statistics indicate that over 50 billion people now live on a dozen worlds, and many are feeling the itch for adventure. With deep-space exploration now practical, thanks to the development of the Alcubierre Warp Drive, many missions have been mounted to explore and colonize neighboring star systems.

These include Earth’s immediate neighbor, Alpha Centauri, but also the viable star systems of Tau Ceti, Kapteyn, Gliese 581, Kepler 62, HD 85512, and many more. With so many Earth-like, potentially habitable planets in the near-universe and now within our reach, nothing seems to stand between us and the dream of an interstellar human race. Missions to find extra-terrestrial intelligence are even being plotted.

This is one prospect humanity both anticipates and fears. While it is clear that no sentient life exists within the local group of star systems, our exploration of the cosmos has just begun. And if our ongoing scientific surveys have proven anything, it is that the conditions for life exist within many star systems and on many worlds. No telling when we might find one that has produced life of comparable complexity to our own, but time will tell.

One can only imagine what they will look like. One can only imagine if they are more or less advanced than us. And most importantly, one can only hope that they will be friendly…

7. Post-Humanity:

Cybernetics, biotechnology, and nanotechnology have led to an era of enhancement where virtually every human being has evolved beyond its biological limitations. Advanced medicine, digital sentience and cryonics have prolonged life indefinitely, and when someone is facing death, they can preserve their neural patterns or their brain for all time by simply uploading or placing it into stasis.

Both of these options have made deep-space exploration a reality. Preserved human beings launch themselves towards exoplanets, while the neural uploads of explorers spend decades or even centuries traveling between solar systems aboard tiny spaceships. Space penetrators are fired in all directions to telexplore the most distant worlds, with the information being beamed back to Earth via quantum communications.

It is an age of posts – post-scarcity, post-mortality, and post-humanism. Despite the existence of two billion organics who have minimal enhancement, there appears to be no stopping the trend. And with the breakneck pace at which life moves around them, it is expected that the unenhanced – “organics” as they are often known – will migrate outward to Europa, Ganymede, Titan, Oberon, and the many space habitats that dot the outer Solar System.

Presumably, they will mount their own space exploration in the coming decades to find new homes abroad in interstellar space, where their kind can expect not to be swept aside by the unstoppable tide of progress.

8. Star Children:

Earth is no more. The Sun is now a mottled shadow of its old self. Surrounded by many layers of computronium, our parent star has gone from being the source of all light and energy in our solar system to the energy source that powers the giant Dyson Swarm at the center of our universe. Within this giant Matrioshka Brain, trillions of human minds live out an existence as quantum-state neural patterns, living indefinitely in simulated realities.

Within the outer Solar System and beyond lie billions more, enhanced trans- and post-humans who have opted for an “Earthly” existence amongst the planets and stars. However, life seems somewhat limited out in those parts, very rustic compared to the infinite bandwidth and computational power of the inner Solar System. And with this strange dichotomy upon them, the human race suspects that it might have solved the Fermi Paradox.

If other sentient life can be expected to have followed a similar pattern of technological development as the human race, then surely they too have evolved to the point where the majority of their species lives in Dyson Swarms around their parent Sun. Venturing beyond holds little appeal, as it means moving away from the source of bandwidth and becoming isolated. Hopefully, enough of them are adventurous enough to meet humanity partway…

_____

Which will come true? Who’s to say? Whether it’s apocalyptic destruction or runaway technological evolution, cataclysmic change is expected and could very well threaten our existence. Personally, I’m hoping for something in the scenario 5 and/or 6 range. It would be nice to know that both humanity and the world it originated from will survive the coming centuries!

The Future of Computing: Brain-Like Computers

It’s no secret that computer scientists and engineers are looking to the human brain as a means of achieving the next great leap in computer evolution. Already, machines are being developed that rely on machine blood, can continue working despite being damaged, and recognize images and speech. And soon, a computer chip that is capable of learning from its mistakes will also be available.

The new computing approach, already in use by some large technology companies, is based on the biological nervous system – specifically on how neurons react to stimuli and connect with other neurons to interpret information. It allows computers to absorb new information while carrying out a task, and adjust what they do based on the changing signals.

The first commercial version of the new kind of computer chip is scheduled to be released in 2014, and was the result of a collaborative effort between I.B.M. and Qualcomm, as well as a Stanford research team. This “neuromorphic processor” can not only automate tasks that once required painstaking programming, but can also sidestep and even tolerate errors, potentially making the term “computer crash” obsolete.

In coming years, the approach will make possible a new generation of artificial intelligence systems that will perform some functions that humans do with ease: see, speak, listen, navigate, manipulate and control. That can hold enormous consequences for tasks like facial and speech recognition, navigation and planning, which are still in elementary stages and rely heavily on human programming.

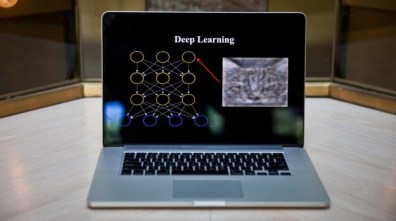

For example, computer vision systems only “recognize” objects that can be identified by the statistics-oriented algorithms programmed into them. An algorithm is like a recipe, a set of step-by-step instructions to perform a calculation. But last year, Google researchers were able to get a machine-learning algorithm, known as a “Google Neural Network”, to perform an identification task (involving cats) without supervision.

This past June, the company said it had used those neural network techniques to develop a new search service to help customers find specific photos more accurately. And this past November, researchers at Stanford University came up with a new algorithm that could give computers the power to more reliably interpret language. It’s known as the Neural Analysis of Sentiment (NaSent).

A similar concept known as Deep Learning is also looking to endow software with a measure of common sense. Google is using this technique with their voice recognition technology to aid in performing searches. In addition, the social media giant Facebook is looking to use deep learning to help them improve Graph Search, an engine that allows users to search activity on their network.

Until now, the design of computers was dictated by ideas originated by the mathematician John von Neumann about 65 years ago. Microprocessors perform operations at lightning speed, following instructions programmed using long strings of binary code (0s and 1s). The information is stored separately in what is known as memory, either in the processor itself, in adjacent storage chips or in higher capacity magnetic disk drives.
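
As a rough illustration of that stored-program design, here is a toy machine in Python. It is only a sketch: the instruction names (LOAD, ADD, STORE, HALT) and the memory layout are invented for this example and do not correspond to any real processor.

```python
# Toy stored-program machine: the program and its data sit in one memory,
# and a single "processor" loop fetches and executes one instruction at a time.
# The instruction set here is hypothetical, made up purely for illustration.

def run(memory):
    acc = 0   # accumulator: the one working register in the processor
    pc = 0    # program counter: address of the next instruction to fetch
    while True:
        op, arg = memory[pc]   # fetch the instruction from memory
        pc += 1
        if op == "LOAD":       # copy a value from memory into the accumulator
            acc = memory[arg]
        elif op == "ADD":      # add a value from memory to the accumulator
            acc += memory[arg]
        elif op == "STORE":    # write the accumulator back to memory
            memory[arg] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown instruction: {op}")

program = [
    ("LOAD", 4),    # address 0
    ("ADD", 5),     # address 1
    ("STORE", 6),   # address 2
    ("HALT", 0),    # address 3
    2,              # address 4: data
    3,              # address 5: data
    0,              # address 6: the result is written here
]

result = run(list(program))
print(result[6])
```

Every step shuttles something between the processor and memory, and nothing in the program changes unless an instruction explicitly rewrites it.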

By contrast, the new processors consist of electronic components that can be connected by wires that mimic biological synapses. Because they are based on large groups of neuron-like elements, they are known as neuromorphic processors, a term credited to the California Institute of Technology physicist Carver Mead, who pioneered the concept in the late 1980s.

These processors are not “programmed” in the conventional sense. Instead, the connections between the circuits are “weighted” according to correlations in data that the processor has already “learned.” Those weights are then altered as data flows into the chip, causing them to change their values and to “spike.” This, in turn, strengthens some connections and weakens others, reacting much the same way the human brain does.
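
To make the idea a little more concrete, here is a minimal sketch in Python of a single neuron-like element. It assumes a simple leaky integrate-and-fire model with a Hebbian-style weight update, which is a common textbook simplification and not the actual design used by I.B.M., Qualcomm or Stanford. The function name `step` and the threshold, leak and learning-rate values are all made up for illustration.

```python
# One neuron-like element with weighted input connections.
# Assumption: leaky integrate-and-fire with a Hebbian-style update,
# chosen for simplicity; real neuromorphic chips differ in detail.

def step(weights, inputs, potential, threshold=1.0, leak=0.9, lr=0.1):
    """Feed one set of input signals through the neuron.

    Returns the (possibly updated) weights, the new potential,
    and whether the neuron spiked on this step.
    """
    # Old charge leaks away a little, then weighted inputs are added.
    potential = potential * leak + sum(w * x for w, x in zip(weights, inputs))
    spiked = potential >= threshold
    if spiked:
        # Connections that were active when the spike happened get stronger,
        # silent ones get weaker (never dropping below zero).
        weights = [
            w + lr if x else max(0.0, w - lr * 0.5)
            for w, x in zip(weights, inputs)
        ]
        potential = 0.0  # reset after firing
    return weights, potential, spiked

weights = [0.4, 0.4, 0.4]
potential = 0.0
spikes = []
for _ in range(5):
    # The first two inputs are always active, the third never is.
    weights, potential, spiked = step(weights, [1, 1, 0], potential)
    spikes.append(spiked)

print(weights, spikes)
```

After a few rounds, the two connections that keep carrying signals end up with larger weights than the idle one. No instruction ever told the element which inputs mattered; the data flowing through it did.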

In the words of Dharmendra Modha, an I.B.M. computer scientist who leads the company’s cognitive computing research effort:

Instead of bringing data to computation as we do today, we can now bring computation to data. Sensors become the computer, and it opens up a new way to use computer chips that can be everywhere.

One great advantage of the new approach is its ability to tolerate glitches, whereas traditional computers cannot work around the failure of even a single transistor. With the biological designs, the algorithms are ever changing, allowing the system to continuously adapt and work around failures to complete tasks. Another benefit is energy efficiency, another inspiration drawn from the human brain.

The new computers, which are still based on silicon chips, will not replace today’s computers, but augment them; at least for the foreseeable future. Many computer designers see them as coprocessors, meaning they can work in tandem with other circuits that can be embedded in smartphones and the centralized computers that run computing clouds.

However, the new approach is still limited, thanks to the fact that scientists still do not fully understand how the human brain functions. As Kwabena Boahen, a computer scientist who leads Stanford’s Brains in Silicon research program, put it:

We have no clue. I’m an engineer, and I build things. There are these highfalutin theories, but give me one that will let me build something.

Luckily, there are efforts underway that are designed to remedy this, with the specific intention of directing that knowledge towards the creation of better computers and AIs. One such effort is the Center for Brains, Minds and Machines, a new research center financed by the National Science Foundation and based at the Massachusetts Institute of Technology, with Harvard and Cornell.

Another is the California Institute for Telecommunications and Information Technology (aka. Calit2) – a center dedicated to innovation in nanotechnology, life sciences, information technology, and telecommunications. As Larry Smarr, an astrophysicist and director of the institute, put it:

We’re moving from engineering computing systems to something that has many of the characteristics of biological computing.

And last, but certainly not least, is the Human Brain Project, an international group of 200 scientists from 80 different research institutions and based in Lausanne, Switzerland. Having secured the $1.6 billion they need to fund their efforts, these researchers will spend the next ten years conducting research that cuts across multiple disciplines.

This initiative, which has been compared to the Large Hadron Collider, will attempt to reconstruct the human brain piece-by-piece and gradually bring these cognitive components into an overarching supercomputer. The expected result of this research will be new platforms for “neuromorphic computing” and “neurorobotics,” allowing for the creation of computing and robotic architectures that mimic the functions of the human brain.

When future generations look back on this decade, no doubt they will refer to it as the birth of the neuromorphic computing revolution. Or maybe just Neuromorphic Revolution for short, but that sort of depends on the outcome. With so many technological revolutions well underway, it is difficult to imagine how the future will look back and characterize this time.

Perhaps, as Charles Stross suggests, it will simply be known as “the teens”, that time in pre-Singularity history where it was all starting to come together, but was yet to explode and violently change everything we know. I for one am looking forward to being around to witness it all!

Sources: nytimes.com, technologyreview.com, calit2.net, humanbrainproject.eu

The Singularity: The End of Sci-Fi?

The coming Singularity… the threshold where we will essentially surpass all our current restrictions and embark on an uncertain future. For many, it’s something to be feared, while for others, it’s something regularly fantasized about. On the one hand, it could mean a future where things like shortages, scarcity, disease, hunger and even death are obsolete. But on the other, it could also mean the end of humanity as we know it.

As a friend of mine recently said, in reference to some of the recent technological breakthroughs: “Cell phones, prosthetics, artificial tissue…you sci-fi writers are going to run out of things to write about soon.” I had to admit he had a point. If and when we reach an age where all scientific breakthroughs that were once the province of speculative writing exist, what will be left to speculate about?

To break it down, simply because I love to do so whenever possible, the concept borrows from the field of physics, where the center of a black hole is described as a “singularity”. It is at this point that all known physical laws, including those governing time and space, break down. Nothing beyond the veil that surrounds it (known as the Event Horizon) can be seen, for no means exist to detect anything.

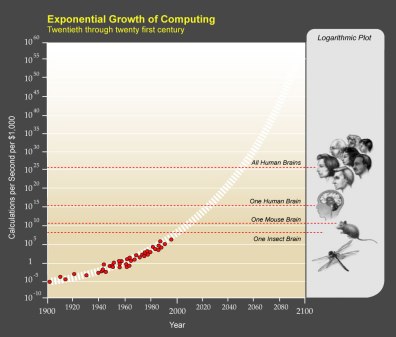

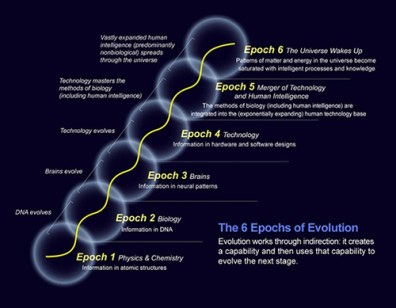

The same principle holds true in this case, or at least that’s the theory. Originally coined by mathematician John von Neumann in the mid-1950s, the term served as a description for a phenomenon of technological acceleration causing an eventual unpredictable outcome in society. In describing it, he spoke of the “ever accelerating progress of technology and changes in the mode of human life, which gives the appearance of approaching some essential singularity in the history of the race beyond which human affairs, as we know them, could not continue.”

The term was then popularized by science fiction writer Vernor Vinge (A Fire Upon the Deep, A Deepness in the Sky, Rainbows End) who argued that artificial intelligence, human biological enhancement, or brain-computer interfaces could be possible causes of the singularity. In more recent times, the same theme has been picked up by futurist Ray Kurzweil, the man who points to the accelerating rate of change throughout history, with special emphasis on the latter half of the 20th century.

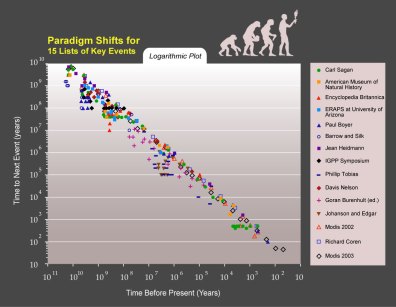

In what Kurzweil described as the “Law of Accelerating Returns”, every major technological breakthrough was preceded by a period of exponential growth. In his writings, he claimed that whenever technology approaches a barrier, new technologies come along to surmount it. He also predicted paradigm shifts will become increasingly common, leading to “technological change so rapid and profound it represents a rupture in the fabric of human history”.

Looking into the deep past, one can see indications of what Kurzweil and others mean. Beginning in the Paleolithic Era, some 70,000 years ago, humanity began to spread out from a small pocket in Africa and adopt the conventions we now associate with modern Homo sapiens – including language, music, tools, myths and rituals.

By the time of the “Upper Paleolithic Revolution” – circa 50,000 to 40,000 years ago – we had spread to all corners of the Old World and left evidence of continuous habitation through tools, cave paintings and burials. In addition, all other existing forms of hominids – such as Homo neanderthalensis and the Denisovans – became extinct around the same time, leading many anthropologists to wonder if the presence of Homo sapiens wasn’t the deciding factor in their disappearance.

And then came another revolution, this one known as the “Neolithic”, which occurred roughly 12,000 years ago. By this time, humanity had hunted countless species to extinction, had spread to the New World, and had begun turning to agriculture to maintain its population levels. Thanks to the cultivation of grains and the domestication of animals, civilization emerged in three parts of the world – the Fertile Crescent, China and the Andes – independently and simultaneously.

All of this gave rise to more habits we take for granted in our modern world, namely written language, metal working, philosophy, astronomy, fine art, architecture, science, mining, slavery, conquest and warfare. Empires that spanned entire continents rose, epics were written, inventions and ideas forged that have stood the test of time. Henceforth, humanity would continue to grow, albeit with some minor setbacks along the way.

And then by the 1500s, something truly immense happened. The hemispheres collided as Europeans, first in small droves, but then en masse, began to cross the ocean and make it home to tell others what they found. What followed was an unprecedented period of expansion, conquest, genocide and slavery. But out of that, a global age was also born, with empires and trade networks spanning the entire planet.

Hold onto your hats, because this is where things really start to pick up. Thanks to the collision of hemispheres, all the corn, tomatoes, avocados, beans, potatoes, gold, silver, chocolate, and vanilla led to a period of unprecedented growth in Europe, leading to the Renaissance, Scientific Revolution, and the Enlightenment. And of course, these revolutions in thought and culture were followed by political revolutions shortly thereafter.

By the 1700s, another revolution began, this one involving industry and the creation of a capitalist economy. Much like the two that preceded it, it was to have a profound and permanent effect on human history. Coal and steam technology gave rise to modern transportation, cities grew, international travel became as extensive as international trade, and every aspect of society became “rationalized”.

By the 20th century, the size and shape of the future really began to come into focus, and many were scared. Humanity, that once tiny speck of organic matter in Africa, now covered the entire Earth and numbered over one and a half billion. And as the century rolled on, the unprecedented growth continued to accelerate. Within 100 years, humanity went from coal and diesel fuel to electrical power and nuclear reactors. We went from crossing the sea in steam ships to going to the moon in rockets.

And then, by the end of the 20th century, humanity once again experienced a revolution in the form of digital technology. By the time the “Information Revolution” had arrived, humanity had reached 6 billion people, was building handheld devices that were faster than computers that once occupied entire rooms, and was exchanging more information in a single day than most people did in an entire century.

And now, we’ve reached an age where all the things we once fantasized about – colonizing the Solar System and beyond, telepathy, implants, nanomachines, quantum computing, cybernetics, artificial intelligence, and bionics – seem to be coming closer to reality every day. As such, futurists’ predictions, like how humans will one day merge their intelligence with machines or live forever in bionic bodies, don’t seem so farfetched. If anything, they seem kind of scary!

There’s no telling where it will go, and it seems like even the near future has become completely unpredictable. The Singularity looms! So really, if the future has become so opaque that accurate predictions are pretty much impossible to make, why bother? What’s more, will predictions come true as the writer is writing about them? Won’t that remove all incentive to write about it?

And really, if the future is to become so unbelievably weird and/or awesome that fact will take the place of fiction, will fantasy become effectively obsolete? Perhaps. So again, why bother? Well, I can think of one reason. Because it’s fun! And because as long as I can, I will continue to! I can’t predict what course the future will take, but knowing that it’s uncertain and impending makes it extremely cool to think about. And since I’m never happy keeping my thoughts to myself, I shall try to write about it!

So here’s to the future! It’s always there, like the horizon. No one can tell what it will bring, but we do know that it will always be there. So let’s embrace it and enter into it together! We knew what we were in for the moment we first woke up and embraced this thing known as humanity.

And for a lovely and detailed breakdown of the Singularity, as well as when and how it will come in the future, go to futuretimeline.net. And be prepared for a little light reading 😉

Big News in Quantum Science!

Welcome all to my 800th post! Woot woot! I couldn’t possibly think of anything too special to write about to mark the occasion, as I seem to acknowledge far too many of these occasions. So instead I thought I’d wait for a much bigger milestone which is on the way and simply do a regular article. Hope you enjoy it, it is the 800th one I’ve written 😉

* * *

2012 saw quite a few technical developments and firsts; so many, in fact, that I had to dedicate two full posts to them! However, one story which didn’t make many news cycles, but may prove to be no less significant, was the progress made in the field of quantum science. In fact, the strides made in this field during the past year were the first indication that a global, quantum internet might actually be possible.

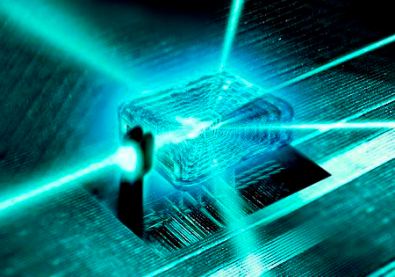

For some time now, scientists and researchers have been toying with the concept of machinery that relies on quantum mechanics. Basically, the idea revolves around “quantum teleportation”, a process where quantum states of matter, rather than matter itself, are beamed from one location to another. Currently, this involves using a high-powered laser to fire entangled photons from one location to the next. When the photon at the receiving end takes on the properties of the photon sent, a quantum teleportation has occurred. No matter actually moves, only its properties, though the receiver still needs a conventional signal from the sender to complete the transfer, so the process cannot outrun the speed of light.
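For the curious, here is a minimal sketch of the textbook teleportation protocol in plain Python. It is my own illustration, not the setup used in any of the experiments discussed in this post, and the function name `teleport` and its arguments are made up for the example. It tracks three qubits as a list of eight amplitudes: the message qubit, and an entangled pair shared between a sender (“Alice”) and a receiver (“Bob”).

```python
import math

def teleport(alpha, beta, m0, m1):
    """Return Bob's qubit after Alice measures (m0, m1) and Bob corrects.

    alpha, beta: amplitudes of the message state alpha|0> + beta|1>
                 (assumed normalized).
    m0, m1:      the two classical bits Alice obtains from her measurement.
    """
    s = 1 / math.sqrt(2)
    # 3-qubit state, index = 4*q0 + 2*q1 + q2.
    # q0 holds the message; q1 (Alice) and q2 (Bob) share an entangled pair.
    state = [0j] * 8
    state[0b000] = alpha * s
    state[0b011] = alpha * s
    state[0b100] = beta * s
    state[0b111] = beta * s

    # CNOT with q0 as control and q1 as target: flip q1 wherever q0 == 1.
    state = [state[i ^ 0b010] if i & 0b100 else state[i] for i in range(8)]

    # Hadamard gate on q0.
    new = [0j] * 8
    for i in range(4):  # i covers the low two bits (q1, q2)
        a0, a1 = state[i], state[0b100 | i]
        new[i] = s * (a0 + a1)
        new[0b100 | i] = s * (a0 - a1)
    state = new

    # Alice's measurement result (m0, m1) selects Bob's two amplitudes.
    base = 4 * m0 + 2 * m1
    b0, b1 = state[base], state[base + 1]
    norm = math.sqrt(abs(b0) ** 2 + abs(b1) ** 2)
    b0, b1 = b0 / norm, b1 / norm

    # Bob can only fix up his qubit once the two classical bits arrive.
    if m1:  # X correction: swap the amplitudes
        b0, b1 = b1, b0
    if m0:  # Z correction: flip the sign of the |1> amplitude
        b1 = -b1
    return b0, b1
```

Calling `teleport(0.6, 0.8j, m0, m1)` for each of the four possible measurement results should hand back the original pair of amplitudes every time. Note that Bob’s corrections depend on `m0` and `m1`, which have to reach him over an ordinary communication channel.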

Two years ago, scientists set the record for the longest teleportation by beaming a photon some 16 km. However, last year, a team of international researchers was able to beam the properties of a photon from their lab in La Palma to another lab in Tenerife, some 143 km away. Not only was this a new record, it was significant because 143 km is roughly the distance needed to reach low Earth orbit satellites, suggesting that a world-spanning quantum network could be built.

Shortly thereafter, China struck back with its own advance, conducting the first teleportation of quantum states between two ensembles of rubidium atoms. Naturally, clusters of atoms are many orders of magnitude larger than a single photon, which qualifies them as “macroscopic objects”. This in turn has led many to believe that large quantities of information could be teleported from one location to the next using this technique in the near future.

And then came another breakthrough from England, where researchers managed to transmit qubits and binary data down the same piece of optic fiber. This laid the groundwork for a conventional internet that runs via optic cable instead of satellites, and which could be protected using quantum cryptography, a secure means of information transfer which remains (in theory) unbreakable.

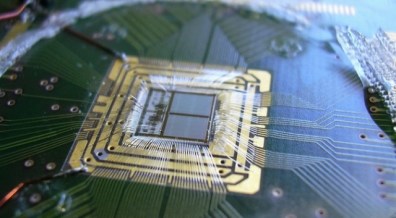

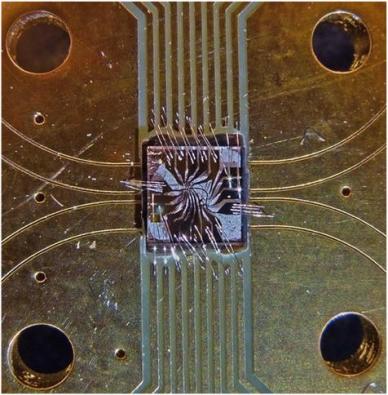

And finally, IBM and the University of Southern California (USC) both reported big advances in the field of quantum computing during 2012. The year began with IBM announcing that it had created a 3-qubit computer chip (video below) capable of performing controlled logic functions. USC could only manage a 2-qubit chip, but it was fashioned out of diamond (pictured at left). Both advances strongly point to a future where your PC could be either completely quantum-based, or where you have a few quantum chips to aid with specific tasks.

As it stands, quantum computing, networking, and cryptography remain in the research and development phase. IBM’s current estimates place the completion of a fully-working quantum computer at roughly ten to fifteen years away. And for now, the machinery needed to conduct any of these processes remains large, bulky and very expensive. But miniaturization and a drop in prices are two things you can always count on in the tech world!

So really, we may be looking at a worldwide, quantum internet by 2025 or 2030. We’re talking about a world in which information is transferred via quantum states, all connections are secure, and computing happens at unheard-of speeds. Sounds impressive, but the real effect of this “quantum revolution” will be the exponential rate at which progress increases. With worldwide information sharing and computing happening so much faster, we can expect further advances in every field to take less time, and breakthroughs to happen on a regular basis.

Yes, this technology could very well be the harbinger of what John von Neumann called the “Technological Singularity”. I know some of you might be feeling nervous at the moment, but somewhere, Ray Kurzweil is doing a happy dance! Just a few more decades before he and others like him can start downloading their brains or getting those long-awaited cybernetic enhancements!

Source: extremetech.com