In their quest to build better, smarter and faster machines, researchers are looking to human biology for inspiration. As has been clear for some time, anthropomorphic robot designs cannot be expected to do the work of a person or replace human rescue workers if they are composed of gears, pulleys, and hydraulics. Not only would they be too slow, but they would be prone to breakage.

Because of this, researchers have been working to create artificial muscles: synthetic tissues that respond to electrical stimuli, are flexible, and can carry several times their own weight – just like the real thing. Such muscles would not only give robots the ability to move and perform tasks with the same range of motion as a human, but are also likely to be far stronger than the flesh and blood variety.

And of late, there have been two key developments on this front which may make this vision come true. The first comes from the US Department of Energy’s Lawrence Berkeley National Laboratory, where a team of researchers has demonstrated a new type of robotic muscle that is 1,000 times more powerful than a human’s, and has the ability to catapult an item 50 times its own weight.

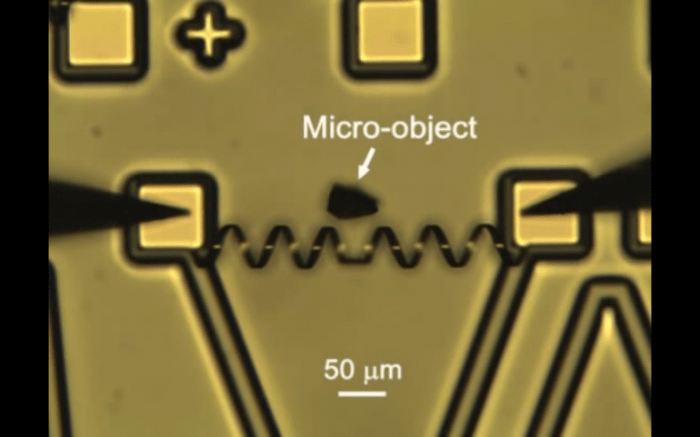

The artificial muscle was constructed using vanadium dioxide, a material known for its ability to rapidly change size and shape. Combining it with chromium on a silicon substrate, the team fashioned a V-shaped ribbon that curled into a coil when released from the substrate. When heated, the coil acted as a micro-catapult with the ability to hurl objects – in this case, a proximity sensor.

Vanadium dioxide boasts several useful qualities for creating miniaturized artificial muscles and motors. An insulator at low temperatures, it abruptly becomes a conductor at 67° Celsius (152.6° F), a quality which makes it an energy-efficient option for electronic devices. In addition, vanadium dioxide crystals undergo a change in their physical form when warmed, contracting along one dimension while expanding along the other two.
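
For readers who like to check the numbers, here is a minimal Python sketch of the transition described above. Only the 67° C figure comes from the article; the function names and the idea of treating the switch as a perfectly sharp step are simplifying assumptions made for illustration, not a model of the real material.

```python
# Illustrative sketch only. The 67 C transition temperature is the figure
# quoted in the article; the names and the idealized step model are assumptions.
VO2_TRANSITION_C = 67.0


def celsius_to_fahrenheit(temp_c: float) -> float:
    """Convert a temperature from Celsius to Fahrenheit."""
    return temp_c * 9.0 / 5.0 + 32.0


def vo2_state(temp_c: float) -> str:
    """Idealized step: insulator below the transition, conductor at or above it."""
    return "conductor" if temp_c >= VO2_TRANSITION_C else "insulator"


if __name__ == "__main__":
    # Confirm the Fahrenheit value given alongside the Celsius figure.
    print(f"Transition: {celsius_to_fahrenheit(VO2_TRANSITION_C):.1f} F")
    for temp in (25.0, 66.0, 67.0, 80.0):
        print(f"{temp:5.1f} C -> {vo2_state(temp)}")
```

The conversion reproduces the 152.6° F figure, and the step function captures the "abrupt" change in the simplest possible way.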

Junqiao Wu, the team’s project leader, had this to say about their invention in a press statement:

Using a simple design and inorganic materials, we achieve superior performance in power density and speed over the motors and actuators now used in integrated micro-systems… With its combination of power and multi-functionality, our micro-muscle shows great potential for applications that require a high level of functionality integration in a small space.

In short, the concept is a big improvement over the gears and motors currently employed in electronic systems. However, since it is on the scale of nanometers, it’s not exactly Terminator-compliant. Still, it does provide some very interesting possibilities for machines of the future, especially where the functionality of micro-systems is concerned.

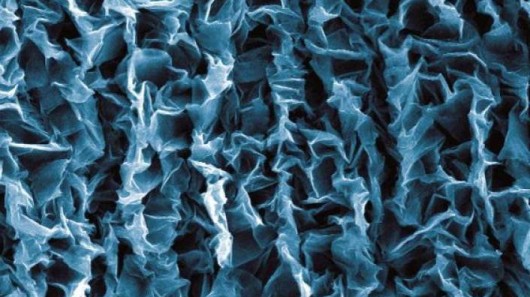

Another development with the potential to create robotic muscles comes from Duke University, where a team of engineers has found a possible way to turn graphene into a stretchable, retractable material. For years now, the miracle properties of graphene have made it an attractive option for batteries, circuits, capacitors, and transistors.

However, graphene’s tendency to stick together once crumpled has had a somewhat limiting effect on its applications. But by attaching the material to a stretchy polymer film, the Duke researchers were able to crumple and then unfold it, resulting in properties that lend it to a broader range of applications, including artificial muscles.

Before adhering the graphene to the rubber film, the researchers first pre-stretched the film to multiple times its original size. The graphene was then attached and, as the rubber film relaxed, the graphene layer compressed and crumpled, forming a pattern where tiny sections were detached. It was this pattern that allowed the graphene to “unfold” when the rubber layer was stretched out again.

The researchers say that by crumpling and stretching, it is possible to tune the graphene from being opaque to transparent, and different polymer films can result in different properties. These include a “soft” material that acts like an artificial muscle. When electricity is applied, the material expands, and when the electricity is cut off, it contracts, with the degree of change depending on the voltage applied.

Xuanhe Zhao, an Assistant Professor at the Pratt School of Engineering, explained the implications of this discovery:

New artificial muscles are enabling diverse technologies ranging from robotics and drug delivery to energy harvesting and storage. In particular, they promise to greatly improve the quality of life for millions of disabled people by providing affordable devices such as lightweight prostheses and full-page Braille displays.

Currently, artificial muscles in robots are mostly of the pneumatic variety, relying on pressurized air to function. However, few robots use them because they can’t be controlled as precisely as electric motors. It’s possible, then, that future robots may use this new rubberized graphene and other carbon-based alternatives as a kind of muscle tissue that would more closely replicate their biological counterparts.

Not only would this be a boon for robotics, but (as Zhao notes) for amputees and prosthetics as well. Already, bionic devices are restoring ability and even sensation to accident victims, veterans and people who suffer from physical disabilities. By incorporating carbon-based, piezoelectric muscles, these prosthetics could function just like the real thing, but with greater strength and carrying capacity.

And of course, there is the potential for cybernetic enhancement, at least in the long term. As soon as such technology becomes commercially available, and even affordable, people will have the option of swapping out their regular flesh and blood muscles for something a little more “sophisticated” and high-performance. So in addition to killer robots, we might want to keep an eye out for deranged cyborg people!

And be sure to check out this video from the Berkeley Lab showing the vanadium dioxide muscle in action:

Source: gizmag.com, (2), extremetech.com, pratt.duke.edu